Repeated cough or just a tickly throat? Safer at home, or better off at school – it’s hard to decide. Tough decisions are confronting us just when the evidence we need is so patchy. The immediacy of the pandemic means the usual conditions for decision-making no longer apply. Conversation with friends may soothe our frayed nerves, but we’re left with anecdote and amateur theorising – useful but hardly definitive.

Image by wayhomestudio

Aggravating as the present circumstances are, it’s not only in pandemics that we are forced to make difficult choices: it’s an all too familiar feature of everyday life. Fortunately it’s also a major focus for scientific research in the fields of experimental psychology and neuroscience. What is it we can learn from studies of such everyday acts?

Decision-making

Economics, since the 17th century, has offered a simple view that you weigh up the pros and cons of each choice and multiply by the chance of it happening. It’s a bit of a pain carrying an umbrella to work but if the chance of rain is high, you take one; if it’s low, you don’t bother. More sophisticated theories also take account of how well you cope with risk and what your individual preferences are.
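To make the umbrella example concrete, here is a minimal sketch of the weighing-up that the classical view describes. The numbers are purely illustrative assumptions, not figures from any study: the nuisance of carrying an umbrella is given a cost of 1 and getting soaked a cost of 5, and the function names are invented for this example.

```python
def expected_cost(p_rain, take_umbrella, cost_carry=1.0, cost_soaked=5.0):
    """Expected cost of one choice (the costs are made-up illustrative units)."""
    if take_umbrella:
        # Carrying the umbrella is a nuisance whether or not it rains.
        return cost_carry
    # Without an umbrella you only pay the price if it actually rains.
    return p_rain * cost_soaked


def should_take_umbrella(p_rain, cost_carry=1.0, cost_soaked=5.0):
    """Pick the option with the lower expected cost."""
    with_umbrella = expected_cost(p_rain, True, cost_carry, cost_soaked)
    without_umbrella = expected_cost(p_rain, False, cost_carry, cost_soaked)
    return with_umbrella < without_umbrella


if __name__ == "__main__":
    for p in (0.1, 0.8):
        print(f"chance of rain {p:.0%}: take umbrella? {should_take_umbrella(p)}")
```

With these assumed costs the umbrella is worth taking once the chance of rain rises above 1 in 5. Someone who minds getting wet more, or minds carrying things less, would set the costs differently, which is roughly what the more sophisticated theories allow for.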

But however smart such theories are, they are based on the idea that we humans always weigh things up rationally. This assumption may make the maths easier for the economist, but you don’t need a degree in psychology to know this isn’t always true. It’s really quite recently that research on our decision-making behaviour has moved onto a more practical level, through the use of experiments. Psychology researchers set up games or situations that simulate real life events and study how people react. This kind of research has thrown up a number of interesting findings that are firm enough to be replicated whenever the experiments are repeated – a hallmark of sound science.

Heuristics

The Nobel Prize was awarded to Daniel Kahneman for work of this kind… and much more. With Amos Tversky he developed a theory that combined insights from both psychology and economics. They identified ways in which we cope with decision-making by using heuristics and biases to simplify the process. Heuristics are simple strategies or mental processes we use to reach decisions or make judgements… known colloquially as rules-of-thumb. Various kinds of heuristic have been identified by psychologists, many of which we will recognise in our personal lives. If we are trying to work out the rough price of something or the area of wall we need to paint, we may round up the numbers to make calculation easy – 6 bread rolls at 48p each is roughly 6 x 50p or £3: a rule of thumb. An educated guess is another example, in which you use past experience to make a reasonable guess without investing too much time and effort in researching carefully.

Common sense is another type of heuristic in which we invoke our personal past experience. It’s appealed to most frequently when right and wrong seem relatively clear cut to us. Another well-known short-cut is believing someone just because they are in authority – the power of the white coat – as in the example of the Milgram experiment described below.

Image reproduced by kind permission of Dan Piraro

Biases

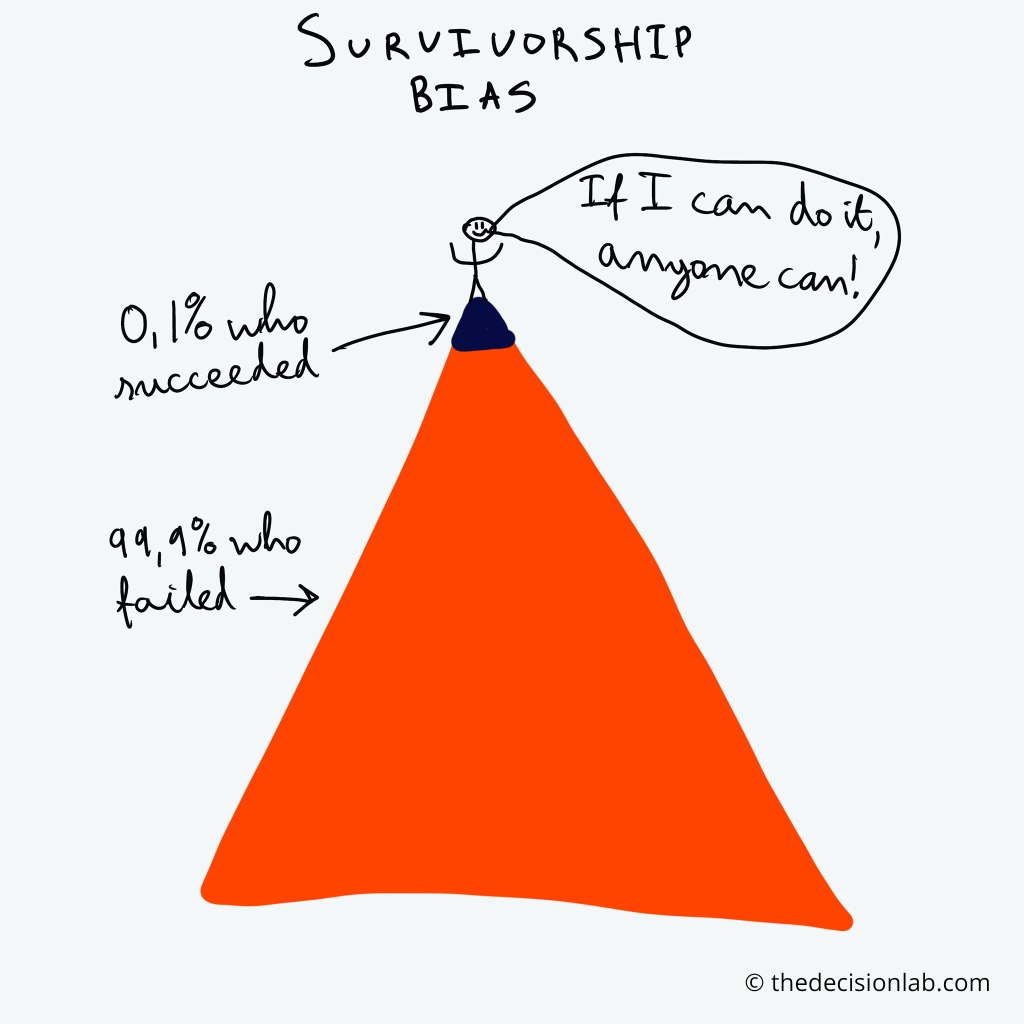

Heuristics are clearly absolutely essential ways of getting through the complexities of real life without collapsing from mental exhaustion. However, they can lead all too easily to bad judgements and decision-making. Psychologists have studied these tendencies in experiments that simulate real situations. They find various ways in which errors, biases and deceptions creep in.

Image courtesy of thedecisionlab.com

One way is by registering a piece of information too strongly just because it came first. For example, a salesperson may show you an expensive item first, so when you are shown something less expensive it seems more affordable. Another kind of error is overestimating whether something is likely to occur just because we remember cases that stick in our mind – like plane crashes or shark attacks.

We are also inclined to judge risks according to our feelings, even if this goes against the facts. An interesting study of the risk from radiation showed that we, the general public, rate the risk from nuclear power waste as very high and that from medical X-rays as low, whereas the majority of radiation experts see it the other way round. A consequence of this bias is that the cost of interventions aimed at saving lives may be out of proportion to the benefits. For example, one study estimates the average cost of saving one year of life in the nuclear industry is around $100 million, whereas by making seat belts compulsory an equivalent saving of lives was estimated to have cost a mere $69.

Our feelings introduce another important bias discovered through experiments using small amounts of money to simulate real life transactions. It is found that, when weighing up options, we tend to be more negatively affected by losing something than we are positively affected by gaining something equivalent.

Framing

A different kind of influence on our decision-making comes from the way things are framed for us. In a classic experiment Kahneman and Tversky organised two groups of people in an experiment about taking a medical decision. The first group was asked to choose between two treatments for a deadly disease. One treatment would save 200 lives out of 600; the other offered a 1 in 3 chance that everyone would be saved but a 2 in 3 chance none would be. Most people decided that the 200 people should be saved – the less risky option. A different group of people were told the same information but framed differently – losing 400 lives rather than saving 200 and a 2/3 chance that all would die. With this more negative framing, the majority of this group chose to take the high risk, with the slender chance of preventing all the deaths.
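A quick calculation shows why this result is so striking: on paper, all four options come out the same. The sketch below simply works through the figures quoted above (600 people, 200 saved or 400 lost, and the 1 in 3 and 2 in 3 chances); the variable and function names are invented for the illustration.

```python
from fractions import Fraction

TOTAL = 600  # people at risk in the scenario


def expected_survivors(outcomes):
    """Average number of survivors over a list of (probability, survivors) pairs."""
    return sum(p * survivors for p, survivors in outcomes)


# First group: options described in terms of lives saved.
saved_certain = [(Fraction(1), 200)]
saved_gamble = [(Fraction(1, 3), 600), (Fraction(2, 3), 0)]

# Second group: the same options described in terms of lives lost.
lost_certain = [(Fraction(1), TOTAL - 400)]
lost_gamble = [(Fraction(1, 3), TOTAL - 0), (Fraction(2, 3), TOTAL - 600)]

if __name__ == "__main__":
    options = {
        "saved, certain": saved_certain,
        "saved, gamble": saved_gamble,
        "lost, certain": lost_certain,
        "lost, gamble": lost_gamble,
    }
    for label, outcomes in options.items():
        print(f"{label}: {expected_survivors(outcomes)} expected survivors")
```

Every option averages out at 200 survivors, so a purely rational calculator would have no reason to change preference between the two groups. The switch can only come from the wording.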

The upshot of this kind of research, and of the behavioural science that is developing from it, is that it can’t be right to assume we all act rationally when we make decisions. We have evolved to use short cuts that introduce bias, error and deception… but, on balance, that’s better than pure guesswork!

Choosing wisely

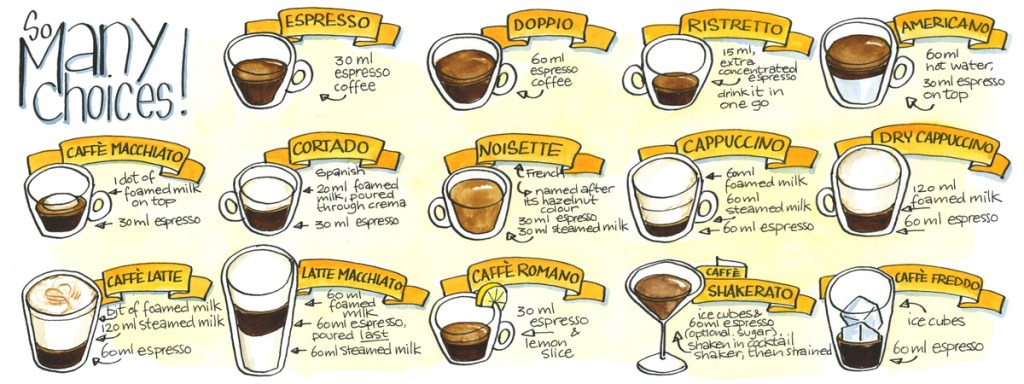

Once we become aware of quirks in the way we make decisions, we are better placed to make good decisions. Research undertaken in Amsterdam showed that sometimes making a snap judgement can be better than deliberating carefully. Too much information can be a problem in situations like picking a holiday destination or dishes from a menu. The researchers concluded that it’s better to think things through for simple choices, but for complex decision-making, less analytical thinking may be preferable.

Any of us may have witnessed a situation where someone persists with a choice even after they know it’s not right – they’re so far invested in it. Studies at Ohio State University indicate that this happens because we feel more committed the more we invest. Sometimes it’s better to just let the past go – to stop throwing good money after bad.

Many studies reveal that almost all of us are susceptible to social pressure, whether from our peers or from figures of authority. The famous Milgram study at Yale University in the 1960s caused shock waves by demonstrating how far volunteers were prepared to go in administering electric shocks to a (stooge) victim if a figure of authority in a white coat told them to do so. Apparently a fatal plane crash in the East Midlands in the UK occurred after a member of the cabin crew, though realising the captain had shut down the wrong engine, decided not to question his authority. Being aware of the pitfalls of such pressure helps with better decision-making.

In today’s world it’s easy to be overwhelmed by excessive choices – particularly in the coffee department.

Image by kind permission of Koosje Koene

A psychologist in Pennsylvania investigated students’ job choices on leaving university. They found, as you might expect, that those who looked thoroughly at every choice moved on to higher salaries than those who just had a ‘good enough’ approach. However, these same higher earners tended to be less satisfied with the choice they had made.

Resisting evidence

Ignoring evidence, whether scientific, legal or personal, is clearly a common aspect of everyday life. We’re all reluctant to cut back on pleasures even when the evidence of their damaging effects is plain to see. It’s not easy to give up smoking, or to cut back on red meat or continental holidays, however aware we are of the research. It’s even more tricky when the evidence runs against our personal or commercial interests.

Research in psychology reveals a further basis for resistance: cognitive dissonance – the theory that people try to avoid inconsistencies in their understanding or beliefs. This avoidance reaction can lead us to ignore unwelcome information, deny it or shut it away in a discrete part of our minds (“compartmentalising” it).

A classic example of professional denial is the case of handwashing. Ignaz Semmelweis was laughed at and ignored by his colleagues when he argued in 1850 that washing hands by medical staff could lower the rate of hospital-acquired infections. What seems common sense to us today ran counter to prevailing ideas about disease at the time. Perhaps the most egregious example of denial for commercial reasons in recent times was the effort made by the tobacco industry to cover up harm from smoking. It commissioned pieces of so-called ‘independent’ research with the aim of creating conflict and sowing confusion.

Widespread denial of science by the public was revealed by a Gallup survey as recently as 2017. It found that about 38 percent of adults in the United States deny evolutionary science, believing that “God created humans in their present form, at one time within the last ten thousand years.” A 2015 study at Yale University found denial to be linked mainly to political and ideological conservatism rather than to ignorance of the scientific facts.

Image courtesy of James MacLeod macleodcartoons@gmail.com

Clearly the consequences of rejecting scientific evidence about handwashing, smoking or climate change are very serious. They put the health of individuals, communities and indeed the planet in jeopardy. Yet avoiding unwelcome evidence is a normal aspect of being human and indeed, to some extent, necessary. A study in nursing found that self-deception can help protect vulnerable patients by distorting reality in a self-enhancing way. It can enhance their sense of control, lower levels of anxiety and enhance decision-making when stressed – the benefit of seeing the world through rose-tinted spectacles, as the researchers put it.

Patchy and dodgy evidence

Even experts can run into difficulties when the evidence is patchy. In such circumstances they tend to move from the established facts to exercising their judgment, according to an article by a specialist in risk analysis. This works well enough when judgments can be tested quickly, as in forecasting the following day’s weather. But in uncharted territory, when feedback from the real world is not available so immediately, experts will be in a similar position to the rest of us. In the current pandemic, the ups and downs of virus mutations and social behaviour patterns are testament to this.

Worse than patchy evidence is deliberate misinformation. Clearly this is regularly put about by individuals and organisations, and its reach is being dramatically extended, thanks to social media. Ethically-motivated organisations and individuals now have to work even harder to ensure their communications are as effective and far reaching as possible. A good example of this is the Zoe/King’s College London Symptom Tracker study which has attracted over 4 million participants who are reporting daily on their symptoms and experiences during the pandemic. It publishes its findings openly through its millions of users. One of its recent studies looked at the reasons people give for their reluctance to accept the vaccine. It found these to be only marginally associated with people’s ideological or religious beliefs but overwhelmingly linked to their ignorance of the facts and fears they perceive about possible consequences – an open door for false information. It is interesting that this seems to run counter to the findings of the 2015 Yale study mentioned three paragraphs back.

Conclusion

Having looked at a number of studies about the actual ways in which we make decisions, it is clear we are not simply the rational beings imagined by classical economists. We are liable to bias, prejudice and denial. In many ways these tendencies are essential for our survival, bombarded as we are by excess stimuli and pressures. On the other hand, they can also cloud our reason and lead us to make poor choices and damaging decisions. Science itself aims to minimise the effects of bias and prejudgment. The insights it offers into the decision-making process may not always save us from ourselves, but they can at least put us on the lookout for our errors… and help us make allowances for them.

© Andrew Morris 17th February 2021

If you would like to follow up any of the research cited above, contact me for references.